ISP Website Blocks: How Bad Are the UK’s Web Filters?

A Top10VPN and Open Rights Group study of UK ISP website blocks reveals that hundreds of charity, school and social support websites across the nation are among thousands of sites wrongfully blocked by overzealous content filters.

Summary: UK ISP Website Blocks in Numbers

- 35 million websites tested and over 760,000 blocked by ISP content filters

- Over 1,300 website blocks have been successfully reversed

- Over 400 UK charity, school and social support websites blocked, with 120 sites still affected

- Over one quarter (27.6%) of website unblock requests to ISPs from 2018 remain unresolved


Introduction

We have partnered with leading digital rights advocates Open Rights Group (ORG) to investigate the extent and impact of overblocking of websites by UK mobile and broadband Internet Service Provider (ISP) adult online content filters.

Our investigation found that the crude approach to adult internet content filtering adopted by ISPs means adults in need are being prevented from accessing vital information and support. Website owners are also affected: charities and support services are being stifled in their missions, while small business owners are losing customers and suffering damage to their reputations.

This is because internet filters are blocking hundreds of websites for charities, schools, the LGBTQ+ community, and services offering support for mental health, addiction and survivors of sexual assault and domestic violence.

We also looked at how ISPs deal with requests to unblock websites that have been incorrectly filtered.

Our findings reveal a fundamentally flawed, unfair and inconsistent system that’s in dire need of reform.

Since 2014 and with the help of volunteers, ORG has operated Blocked.org.uk, which hosts an online tool that allows anyone to check whether a site is being blocked and report any that shouldn’t be to the ISPs blocking them.

ORG has collected results of over 35 million website tests and indexed over 760,000 blocked unique domains since then, with 90% of that data collected since March 2017.

This investigation has culminated in a new report, published in partnership with ORG, Collateral Damage in the War Against Online Harms, the most comprehensive study of UK website blocks to date.

The report finds that over 8,000 internet domains have been blocked by UK ISPs in the last two years across just a handful of categories that do not appear to be harmful to children.

Along with the third-sector and related sites already mentioned, websites for builders, drainage companies, wedding services, photographers and religious groups are revealed to be disproportionately affected, with over 3,300 of those domains still blocked by at least one ISP.

ISP adult online content filters are switched on by default in the UK and consumers must actively opt out if they want unfiltered internet access.

As a result, 3.7 million households have active online content filters, in addition to mobile phone users who haven’t opted out of the default internet filters.

ISPs outsource filtering to third-party companies, which provide out-of-the-box solutions that appear to rely largely on basic keywords to identify harmful online content.

Unfortunately, these filters are not only totally opaque but also very crude. For example, drainage company websites are often blocked, apparently because they advertise “unblocking” services: the filters misidentify them as online privacy tools, which ISPs deem harmful content.

The indiscriminate nature of these filters is underlined by the fact that fewer than 5% of challenged website blocks have failed to be overturned since 2017. Over the same period, 1,300 website blocks were reversed, suggesting that many more websites have been, and remain, incorrectly censored.

The issue is compounded by two key factors:

- Businesses and charities are rarely aware their websites are being blocked unless their own ISP is also filtering them

- ISP response rates to website unblock requests are unacceptably poor

Almost three in ten (27.6%) unblock requests to ISPs from 2018 are still unresolved, with TalkTalk, Sky and Virgin Media the worst offenders.

The automated filtering systems are also completely opaque and highly inconsistent across providers, making it very difficult to predict what websites will be blocked and by whom.

This has created a lose-lose situation.

We urge the Government to adopt the recommendations in our report to solve this self-inflicted harm.

Content misclassification

Due to the automated nature of online content filters, it’s possible to identify recurring patterns of content misclassification.

Certain categories of website suffer disproportionately as a result of content filtering. However, due to the complete lack of transparency from ISPs over how these internet filters work, it’s not possible to state with any certainty why this might be.

Nevertheless, it’s clear that many website blocks appear to be the result of blunt filtering triggered by the detection of blacklisted words, with no regard for context.
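To make this failure mode concrete, here is a minimal sketch of a context-free keyword filter in Python. The blocklist and category names are hypothetical, since the vendors’ real word lists are not public. It only shows how plain substring matching can flag a drainage firm advertising “unblocking” as a privacy tool, and trip over a place name such as Essex (an instance of the “Scunthorpe Problem”).

```python
"""Hypothetical context-free keyword filter, for illustration only.

Real vendor filters are opaque; this blocklist is invented.
"""

# Hypothetical blocklist: category -> trigger substrings.
BLOCKLIST = {
    "adult": ["sex", "porn"],
    "drugs": ["drug", "alcohol"],
    "privacy_tools": ["unblock", "proxy", "vpn"],
}


def classify(page_text: str) -> list[str]:
    """Return the sorted categories whose trigger words appear in page_text.

    Matching is case-insensitive substring search with no regard for
    context, so "unblocking" matches "unblock" and "Essex" matches "sex".
    """
    text = page_text.lower()
    return sorted(
        category
        for category, words in BLOCKLIST.items()
        if any(word in text for word in words)
    )


if __name__ == "__main__":
    pages = {
        "drainage firm": "24-hour drain unblocking across Essex",
        "addiction charity": "Confidential support for drug and alcohol recovery",
        "bakery": "Fresh bread baked daily",
    }
    for name, text in pages.items():
        print(f"{name}: {classify(text)}")
```

Run as written, the sketch flags the drainage firm as both adult content and a privacy tool, and the addiction charity under drugs; only the bakery passes. Whole-word matching would remove the Essex false positive, but no word list on its own can tell an addiction charity’s mention of drugs apart from harmful content.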

It’s also possible that websites are classified according to their hosting provider.

This might result in all sites sharing a particular IP address being classed as likely pornography, for example, even when the sites are in fact radically different.
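A hypothetical sketch of how shared-IP classification could spread a single verdict to unrelated sites follows. It illustrates the possibility raised above and does not describe any ISP’s actual system; the domains use the reserved .example TLD, the addresses come from documentation ranges, and the function name is invented.

```python
"""Hypothetical sketch: a classification spreading to every site on a shared IP.

Illustrates a possibility only; no ISP's actual system is described here.
Domains use the reserved .example TLD and IPs come from documentation ranges.
"""

from collections import defaultdict

# Hypothetical hosting map: domain -> IP address it resolves to.
EXAMPLE_HOSTING = {
    "adult-site.example": "192.0.2.10",
    "church.example": "192.0.2.10",
    "florist.example": "192.0.2.10",
    "bakery.example": "198.51.100.7",
}


def propagate_by_ip(domain_ip: dict[str, str], flagged: set[str]) -> set[str]:
    """Return the flagged domains plus every domain sharing an IP with one."""
    # Group domains by the IP address they are hosted on.
    domains_on_ip: dict[str, set[str]] = defaultdict(set)
    for domain, ip in domain_ip.items():
        domains_on_ip[ip].add(domain)

    blocked: set[str] = set()
    for domain in flagged:
        ip = domain_ip.get(domain)
        if ip is None:
            # No hosting data: only the flagged domain itself is blocked.
            blocked.add(domain)
        else:
            # Every co-hosted site inherits the flagged site's classification.
            blocked |= domains_on_ip[ip]
    return blocked


if __name__ == "__main__":
    print(sorted(propagate_by_ip(EXAMPLE_HOSTING, {"adult-site.example"})))
```

Here one flagged site is enough to block a church and a florist that merely share its server, while the bakery on a different address is untouched.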

The following categories of site indicate some of the most egregious website blocks we have discovered.

These figures cover UK sites only. See the full report (Tables A.1 and A.3) for the total numbers of sites blocked, which are higher because they include US-based sites blocked by UK ISPs.

Note: “Current ISP blocks” below refers to the total number of active ISP website blocks detected for that category. Because a domain may be blocked by more than one provider, this figure can exceed the number of domains still blocked.

| Website category | Blocked domains | Domains still blocked | Current ISP blocks |
| --- | ---: | ---: | ---: |
| Addiction, substance abuse support | 35 | 14 | 48 |
| Charities and non-profits | 91 | 17 | 24 |
| Counselling, support, and mental health | 104 | 70 | 177 |
| Domestic violence and sexual abuse support | 7 | 3 | 11 |
| LGBTQ+ | 27 | 7 | 25 |
| Schools | 143 | 13 | 28 |
| Building and supplies | 67 | 26 | 64 |
| CBD-related and hemp products | 307 | 220 | 1081 |
| Drainage and drain unblocking | 107 | 3 | 3 |
| Photography | 1858 | 732 | 2109 |
| Religious | 137 | 54 | 147 |
| Wedding services | 4506 | 1718 | 3739 |
| VPN-related | 404 | 345 | 1719 |

It’s an unfortunate irony that internet content filters designed to protect vulnerable people from online harms are also preventing in-need adults from accessing information and support.

Websites offering support for survivors of domestic violence and sexual abuse understandably make frequent use of words that a crude keyword filter could interpret as sexual or pornographic.

Similarly, websites for counselling and mental health support may contain references to suicide, self-harm and sex, while addiction support services will naturally refer to drugs and alcohol.

The damage caused by this type of filtering is potentially very large, and ISPs should therefore take proactive steps to ensure that their filtering systems exempt sites which fit into these categories.

The blocking of school websites is particularly illustrative of the low-effort nature of these filters. At least 34 unique sites under the .sch.uk domain have been filtered during the lifetime of the Blocked project. These domains cannot be registered privately and are only available to schools in the United Kingdom.

It would be trivial to exempt .sch.uk domains from blocking, yet this hasn’t happened.
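As a rough illustration of how little work such an exemption would involve, here is a minimal sketch of a suffix allowlist checked before any classifier verdict. The names `EXEMPT_SUFFIXES`, `is_exempt` and `should_filter` and the decision flow are hypothetical; the one detail that matters is matching on a label boundary, so that a lookalike such as fakesch.uk is not exempted.

```python
"""Hypothetical sketch: exempting .sch.uk hostnames before content filtering.

Assumes the filter sees a bare hostname; all names here are illustrative.
"""

# Restricted namespaces that only eligible bodies (here, UK schools) can use.
EXEMPT_SUFFIXES = ("sch.uk",)


def is_exempt(hostname: str) -> bool:
    """True if hostname is an exempt suffix or any subdomain of one."""
    host = hostname.lower().rstrip(".")
    # Require a "." before the suffix so "fakesch.uk" does not match.
    return any(host == s or host.endswith("." + s) for s in EXEMPT_SUFFIXES)


def should_filter(hostname: str, flagged_by_classifier: bool) -> bool:
    """Exempt hostnames bypass the classifier's verdict entirely."""
    if is_exempt(hostname):
        return False
    return flagged_by_classifier


if __name__ == "__main__":
    for host in ("stmarys.example.sch.uk", "fakesch.uk", "shop.example.co.uk"):
        print(host, should_filter(host, flagged_by_classifier=True))
```

Because the check runs before the keyword classifier, a school site is never blocked however its pages are worded, while every other hostname is still filtered as before.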

See the full report for further analysis of these categories. The report also covers the following problematic categories of blocks:

- Alcohol-related (non-sales) websites

- The “Scunthorpe Problem”

- CDNs, APIs, and image hosting services

- Technical back-end sites

- Pre-launch sites

- Products already subject to age restrictions

- VPN services

- Remote access software

Unblock Request Findings

Users of the Blocked tool can ask ISPs to reconsider, and potentially reclassify, websites they have blocked.

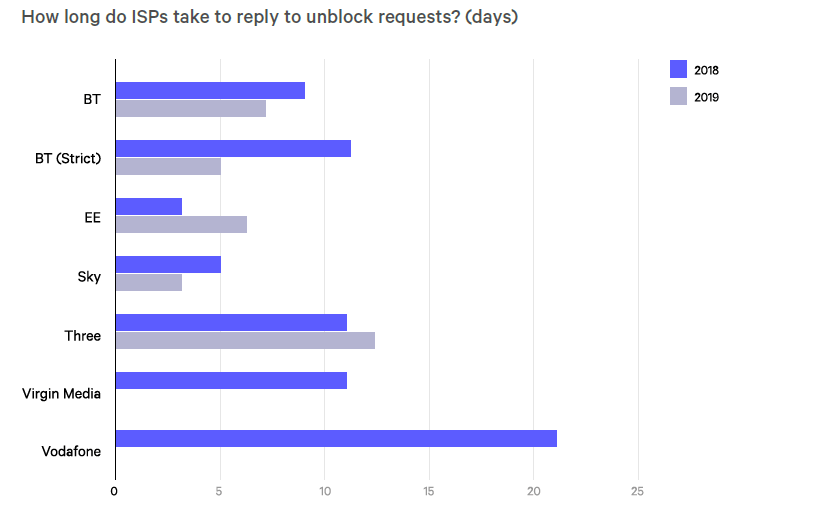

It took an average of 8 days for ISPs to respond to unblock requests in 2018. Vodafone were by far the slowest with an average 21-day response time.

Time taken for ISPs to reply to unblock requests, in days.

Overblocking harms both website owners and the users of those websites. It’s therefore vital that ISPs promptly acknowledge and reply to unblock requests.

In the report, we suggest that users reporting wrongful website blocks should expect to receive a reply within a fixed time frame – ideally no more than 48 hours.

The data above demonstrates that ISPs have room to improve the speed at which they reply.

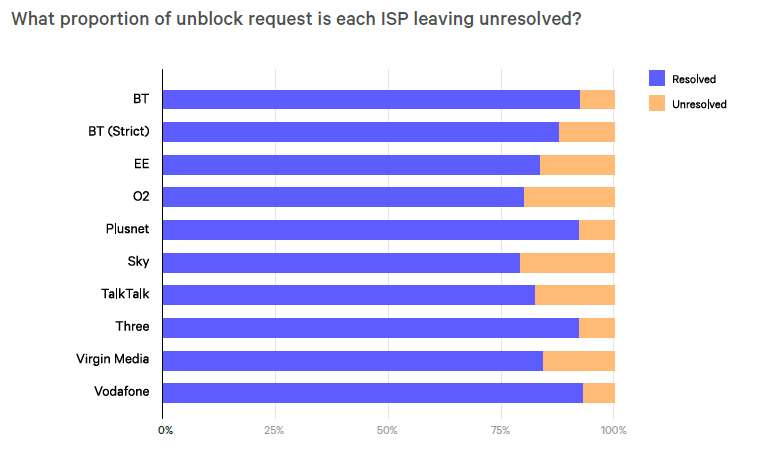

Even more problematic is the proportion of website unblock requests that go unanswered.

We found that almost three in ten (27.6%) website unblock requests submitted in 2018 were still unresolved in March 2019. Over half of these outstanding cases relate to websites that do not fall into any of the content categories the ISP blocks by policy, and should have been unblocked immediately upon request.

Our data suggests that some website unblock requests are simply not dealt with at all by ISPs.

The following table shows which ISPs are the best and worst performers in this regard.

Status of website unblock requests forwarded to ISPs in 2018, as of March 2019.

Further analysis of unblock requests can be found in the full report, including an assessment of the appeals process (or lack thereof) for fixed-line ISP blocks.

Background

Why are websites being blocked in the UK?

ISPs in the UK have applied filters to internet connections since 2011 in an effort to block children from accessing websites which host online content considered inappropriate.

This push was informally backed by the Government, who wanted to show that the UK was at the forefront of protecting children from online content.

The Government announced in 2013 that the four main ISPs in the UK (TalkTalk, Virgin Media, Sky and BT) had agreed to install ‘family friendly’ content filters and to promote them to their existing and new customers.

Why conduct this research?

There are a number of key reasons prompting this research:

- The lack of evidence that internet filters prevent children from seeing adult content or keep them safe online

- Private companies are making questionable choices about what is and isn’t acceptable for under 18s, with no oversight or consideration of actual harms to young people

- Following the passing of an EU regulation on net neutrality, internet filtering by ISPs appears to be prohibited

- Filters are a flawed technical solution to a social problem

Policy Context

What problem are content filters trying to solve?

Fears about the corrosive influence of internet pornography on young people, along with potential harms caused by online content promoting self-harm, extremism and anorexia, have been at the centre of media debate about the protection of young people.

However, as our report shows, content filters cover a much wider range of subject matter, including alcohol, drugs, sex, religion, and politics. Importantly, these internet filters affect adults as well as children.

Assessing the harms of adult content

While it’s beyond our remit to assess the impact of adult material on children, in the report we do urge policy makers to:

- Take an evidence-based approach

- Frame the debate correctly

- Include young people’s voices

- Not focus solely on risk

- Not focus solely on technology

- Encourage active parenting

Filters as a solution

Network-level filters have been promoted as a simple way of preventing children from seeing adult content. Parents do not need any technical expertise to activate them.

Former Prime Minister David Cameron said filters were intended to provide “One click to protect your whole home and to keep your children safe.”

As our report shows, this simplistic view is misleading and potentially counterproductive.

Internet filters – whether applied at home or in schools – have now become central to government policies for children’s safety online.

Mobile phone filters are switched on by default by a number of providers, including EE, Telefonica (O2), Three and Vodafone. Mobile phone customers generally have to prove they are over 18 if they want to switch internet filters off. Some networks require the submission of identification documents, such as a passport, in order to allow the filters to be disabled.

Filtering on fixed-line ISPs began to roll out in 2014. Network-level blocking means ISPs enable filters that apply to every device connected to a household network. They can only be switched on or off by the account holder. Most ISPs offer different levels of filtering and some allow customers to customise the categories they would like to be blocked.

Do filters prevent children from seeing harmful content?

It can be assumed that filters will limit very young children’s ability to see pornography, unless the children are particularly adept at using technology.

However, they are unable to protect children from individual pieces of online content on sites like Twitter, Facebook and YouTube.

Because there are multiple ways in which children may come across inappropriate internet content, web filters cannot act as a panacea that protects children completely.

Some of the ways that older children may be able to see pornography or other banned content include:

- Friends

- Proxy sites

- Tor

- File-sharing services

- Free VPNs

- Data storage devices

- Sexting

Harms of overblocking

Even if only a small proportion of websites are incorrectly blocked, there can still be significant consequences. As our report indicates, many websites performing socially important functions are being incorrectly blocked.

Vulnerable people in crisis are being prevented from accessing the information and support they need. This is not an acceptable state of affairs.

Small businesses also suffer lost custom and reputational damage, and are disproportionately affected compared with larger businesses.

A number of specialised wine merchants, for example, have experienced their sites being blocked by ISP filters. We do not see the same outcome for supermarkets selling alcohol and stocking the same products. This is despite the fact that both pose the same potential harm to minors.

Legal basis for content filtering

European net neutrality regulations appear to render adult online content filtering in its current form unlawful, despite attempts to change UK law in order to sidestep the European Union rules.

There are questions over whether content filtering in its current form is legal.

There has never been a legal obligation for companies to provide filters – the companies voluntarily agreed to do so.

The EU agreed regulations in 2015 that state ISPs “should treat all traffic equally, without discrimination, restriction or interference, independently of its sender or receiver, content, application or service, or terminal equipment”.

While these rules do allow for exemptions, the UK blocking arrangements do not meet the criteria to be exempt.

In our report, we call on Ofcom to clarify the legal status and basis for adult content filtering, and to provide guidance to companies that might be in breach of the law.

Error correction

Correcting errors made by internet content filters is not necessarily easy, and was not prioritised by the Government alongside the introduction of web filters.

O2 are the only provider to have a URL checker, for example. This tool ended up being disabled for over a year, beginning in late 2013, following its use by journalists.

Each ISP provides an email address for reports of overblocking, but ISPs do not accept bulk or automated enquiries.

ORG considers these solutions inadequate, so it runs its own system, Blocked, which relies on donations and support from partners such as Top10VPN.

Recommendations

- Opt-in internet filters rather than opt-out

- Harm-based evaluation of online content, with greater transparency on how websites are blocked

- Inform websites that they are blocked and provide an opportunity for appeal

- Better processes for identifying and requesting unblocking of websites

- Appeals: fixed-line ISPs should implement an appeals process while mobile network operators should better communicate theirs

- Device level filtering rather than network level

- Ofcom must clarify the legal situation

- Conduct independent research into the risks of online content

Methodology

35 million sites have been tested using the tool at Blocked.org.uk since its launch in 2014, and over 760,000 were found to be blocked by ISP content filters. Testing expanded massively from March 2017 onwards, and 90% of tests and results date from then. See the appendices of the full report for the complete methodology.